This is a remake of my original cartoon, which was published by SDTimes, N.Y., in their newsletter of April 1, 2008.

Wednesday, November 29, 2023

Software made on Earth

Sunday, November 5, 2023

Testing under the Hood

Even when a particular test passes at first glance, things may still be going wrong. You may just not have noticed, because the user interface stays quiet, at least for the moment. Things can go wrong in a black box long after you executed the test: hours, days or even weeks later. The longer such problems remain undetected, the more effort it takes to fix them and repair the damage they caused, especially if the system is already LIVE in production. See also Cheerful Debugging Messages and its Consequences in this blog.

It is not enough to look only at the front-end of an application. You should also watch carefully what’s going on behind the curtains. Give all testers a facility to query the underlying database. A lot of things can go wrong there and remain undetected for too long. It starts hurting only when such data is shared with or passed to other programs that read or exchange data through a corresponding interface. I have seen a lot of things stored inappropriately, only to hurt when the data was later used by another program.

I developed an SQL query tool with some extra facilities like an analyser to compare all tables before and after a triggered action.

How can testers live without such tools? It opens a whole new universe of potential problems just waiting to get reported.
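A before/after comparison of all tables can be sketched in a few lines. This is only an illustration of the idea, not the tool mentioned above: it assumes an SQLite database, and the table names `orders` and `audit_log` as well as the helpers `snapshot` and `diff` are made up for the example.

```python
import sqlite3


def snapshot(conn):
    """Return {table_name: list of rows} for every user table."""
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%'"
        )
    ]
    return {t: conn.execute(f'SELECT * FROM "{t}"').fetchall() for t in tables}


def diff(before, after):
    """Return {table: (added_rows, removed_rows)} for tables that changed.

    Rows are compared as sets, so exact duplicate rows are not distinguished.
    """
    changes = {}
    for table in sorted(set(before) | set(after)):
        old, new = set(before.get(table, [])), set(after.get(table, []))
        if old != new:
            changes[table] = (sorted(new - old, key=repr), sorted(old - new, key=repr))
    return changes


# Hypothetical scenario: the UI reports success, but a log row is stored with NULL.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
conn.execute("CREATE TABLE audit_log (id INTEGER PRIMARY KEY, message TEXT)")
conn.execute("INSERT INTO orders VALUES (1, 'open')")

before = snapshot(conn)
conn.execute("UPDATE orders SET status = 'closed' WHERE id = 1")  # the triggered action
conn.execute("INSERT INTO audit_log VALUES (1, NULL)")            # silent side effect
after = snapshot(conn)

for table, (added, removed) in diff(before, after).items():
    print(table, "added:", added, "removed:", removed)
```

The point of diffing every table, rather than only the one you expect to change, is that the surprising rows usually show up somewhere you were not looking.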

Wednesday, September 27, 2023

Revise the Test Report

I thought I'd published this cartoon back in 2020, but I couldn't find it, so I am doing it now, with a three-year delay... =;O) Funny though, the cartoon is as relevant today as it was when I originally created it. History repeats itself.

Tuesday, August 15, 2023

Mutation Testing and why we don't need it, or do we?

When our kids were still small, every Easter, it was a tradition to hide chocolate eggs, sweets and small presents in the garden, around the house, at the carport and sometimes also within the house.

While the kids were so excited to find all the little things, we parents watched them, equally excited.

When Easter was long over, one egg or another was often still found by accident in a corner or in a plant pot, too old to be edible. In other words, our kids didn't track down all of them at Easter. Over the years, they got better and better. We had to be more creative in finding new, extraordinary places to hide the little things, so they didn't have an easy catch ("low-hanging fruit", as testers would say).

While we never gave a thought to our kids' "mathematical" effectiveness in finding all these little presents, this is exactly what mutation testing is all about.

It is a method to measure the effectiveness of unit tests in detecting anomalies in the code. The idea is to inject bugs on purpose and then verify how many of them are found. That's pretty much the same as hiding chocolate eggs in the garden.

A typical example of an injected bug (mutant) is changing a comparison operator from something like (x<y) to (x>y), or flipping a boolean value from true to false or vice versa. In the case of a calculation engine, the computed value could be fuzzed so that it returns an incorrect result. The point is that these bugs are introduced on purpose and - in contrast to our annual tradition at Easter - the tool that modifies the code knows exactly how many mutants were added and where.

When executing the unit tests, the mutation testing tool compares the number of failed tests with and without the modified code. If the number of failed tests is the same in both scenarios, the tests did not notice the mutant, which is an indication of inadequate tests.
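To make the egg hunt concrete, here is a hand-rolled sketch of the principle. It is not how a real mutation testing plug-in works internally; the function `is_minor`, the two mutants and the two test suites are invented for illustration, and the mutants are built by swapping the comparison operator by hand.

```python
import operator


def make_is_minor(compare):
    """Build the function under test; a mutant swaps out `compare`."""
    def is_minor(age):
        return compare(age, 18)
    return is_minor


original = make_is_minor(operator.lt)          # age < 18
mutants = {
    "< replaced by >": make_is_minor(operator.gt),
    "< replaced by <=": make_is_minor(operator.le),
}


def weak_suite(fn):
    """Only checks one obvious value; returns True if all checks pass."""
    return fn(10) is True


def strong_suite(fn):
    """Also checks the boundary and a value above it."""
    return fn(10) is True and fn(18) is False and fn(30) is False


def mutation_score(suite, original, mutants):
    """Return (killed, total): a mutant is killed when the suite fails on it."""
    assert suite(original), "the suite must pass on the unmodified code"
    killed = [name for name, mutant in mutants.items() if not suite(mutant)]
    return len(killed), len(mutants)


print("weak suite:  ", mutation_score(weak_suite, original, mutants))
print("strong suite:", mutation_score(strong_suite, original, mutants))
```

The weak suite lets the `<=` mutant survive because it never looks at the boundary value 18, which is exactly the kind of egg that is still lying in the plant pot weeks later.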

I am not experienced in automated mutation testing, but I find this topic quite interesting, especially because IT companies tend to measure just the test coverage but often have no idea whether their unit tests are really effective. Test coverage doesn't tell you anything about the quality of the code. You can have 100% test coverage for one method and still fail miserably with uncaught exceptions when other valid inputs are applied to the method.
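A tiny, made-up example of that last point: the single test below executes every line of `average`, so a line-coverage report would show 100% for it, yet an empty list (arguably a valid input) still blows up. The function and test names are assumptions for illustration only.

```python
def average(values):
    total = sum(values)
    return total / len(values)


def test_average():
    # Executes both lines of average(), i.e. full line coverage.
    assert average([2, 4, 6]) == 4


test_average()

try:
    average([])  # never exercised by the test above
except ZeroDivisionError as exc:
    print("uncaught in production:", exc)
```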

Although mutation testing is usually done as part of automated tests using corresponding plug-ins, you can also do mutation testing manually. When I was drawing the cartoon, I was focused more on the manual aspect and less on the potential of using it to test existing automated tests.

Let's take a few steps back and look at our approach today. We have a lot of manual tests (> 1000), a lot of unit tests (> 20'000), a very effective API test suite (> 3000 tests) and a few UI tests (ca. 100), following the typical test automation pyramid in terms of distribution of the tests, but we haven't integrated any sort of mutation testing yet.

I get emailed automatically whenever our testers find defects through manual testing, the automated UI test scripts or the automated API tests. Based on the number of emails received daily, I draw the conclusion that we are an effective test team finding many defects. But, of course, it would be more interesting to learn whether we could do even better. Are there even more bugs around to catch? Honestly, with the current number of anomalies reported by my testers, my first reaction was rather defensive. Why should I inject any additional bugs on purpose? We already have enough to do analyzing all the findings that slipped into the code unintentionally. This was also the original idea behind the cartoon, but... here is my mistake:

We have no facts at hand but simply a certain amount of defects we raise every week.

Mutation Testing could help us collect more facts. Mutation Testing can not only be applied through tools, it can also be done manually. For example, if you want to understand how long it takes to find a certain (obvious) bug introduced on purpose, just add it and see. You don't even need to inject code; you can also change a configuration setting so that it leads to different (unexpected) behavior.

For example, one of the applications I test creates documents with inquiries to doctors. A configuration setting allows the documents to be fitted with a data-matrix code on the pages the doctors have to fill out and return. When the letters are returned with the data-matrix code on them, a software component can automatically identify the original request and the related patient, then map it to the answer received. This enables quick access to both the original request letter and the response.

The configuration could be turned off (on purpose), causing the created letters to be sent out without a data-matrix code. How long do you think it will take until our testers notice the missing data-matrix code on the letters? I am pretty sure it won't take long, because such a test is well documented in the regression test suite. But what if we challenge them more - like making the letters print a hard-coded data-matrix code that is the same for all letters?

It takes more effort for a tester to find that problem.
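The difference between the two mutants can be shown with two checks. This is a sketch under assumptions: letters are modelled as plain dictionaries with a hypothetical `data_matrix` field, and both check functions are invented here, not taken from the real regression suite.

```python
def every_letter_has_code(letters):
    """The well-documented check: is there a data-matrix code at all?"""
    return all(letter.get("data_matrix") for letter in letters)


def codes_are_unique(letters):
    """The harder check: does every letter carry its own code?"""
    codes = [letter.get("data_matrix") for letter in letters]
    return len(codes) == len(set(codes))


# Mutant 1: configuration turned off, no code printed.
without_code = [{"request": "R1"}, {"request": "R2"}]

# Mutant 2: the same hard-coded code on every letter.
hard_coded = [
    {"request": "R1", "data_matrix": "DM-0001"},
    {"request": "R2", "data_matrix": "DM-0001"},
]

print(every_letter_has_code(without_code))  # the first check catches mutant 1
print(every_letter_has_code(hard_coded))    # ...but is satisfied by mutant 2
print(codes_are_unique(hard_coded))         # only the second check catches it
```

A tester who looks at one letter at a time effectively runs only the first check, which is why the second mutant is so much harder to find.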

If the test is not documented, the testers are likely to miss the bug. If it is documented, it may still depend on the priority set for the test case whether the test is executed at all. If testers are all too confident that this piece is unlikely to fail, they won't test it either.

If you inject such mutants, you need to be clear on your goal. Do you want to test the efficiency of the testers, the accuracy of the test cases or the effectiveness of automated tests?

Saturday, August 5, 2023

Saturday, May 13, 2023

License Expired

In an amusing short video from CNN[1], Alexei Navalny, a Russian opposition leader and anti-corruption activist, explained the meme MOSCOW4. It is representative of the stupidity of Putin’s command structure which - according to Navalny - consists of an array of complete morons. He underlined the statement with the example of one of them whose email password was hacked several times in a row. The first password was “Moscow1”, then “Moscow2”, etc.

After we ourselves managed several times to forget to update expiring license keys for our customers, I remembered this story. We are not any better, and I thought it was about time to honour our repeated mishap with a corresponding cartoon. For the dinosaurs, I experimented with a different kind of grey fill; something Gary Larson had in his cartoons, too.

[1] https://edition.cnn.com/videos/world/2022/04/19/navalny-moscow-4-origseriesfilms-3.cnn

Sunday, March 12, 2023

Lindy's Law in Test Automation

by T. J. Zelger, March 12, 2023

When I developed my first "robot" 20 years ago with the goal of automatically testing the software so that my team didn't have to do the same manual tests every day, there were at most a handful of products enabling it. There were tools from well-known companies like IBM and Mercury (now HP), and these were extremely expensive. You didn't have much choice. Once you had decided on a tool, it was almost impossible to revert that decision and go for another; doing so inevitably resulted in enormous extra costs.

A little later, a few interesting and cheaper alternatives emerged, such as RANOREX, an Austrian company that soon taught the big ones fear with its quality and attractive value for money.

We also experimented with other products that we used for specific tasks and later replaced with newer or better ones. Among these, I focused on a Canadian product which provided us with valuable services when testing a vehicle valuation calculation engine. As far as I know, the product no longer exists today and my memories are patchy, but I believe it was a forerunner of one of the open source systems that are widely used today, or it may have been something similar.

20 years later, you will find a flood of even cheaper or free offers. A closer look reveals that most products are based on a few identical core modules. Selenium is currently one of the most popular "engines" on which most tools are based. I also use Selenium, and because it's actually just an "engine", you have to develop additional methods and modules on top of it to turn it into a stable and easy-to-maintain test automation suite.

Nowadays, when building their own framework, most teams stick to the page-object model. However, we used to have a different approach. Our test data and instructions (action words) were kept in Excel. The idea was to enable testers with no programming skills to write automated tests. At the beginning, we even thought we could convince our business analysts to maintain their own tests.

The idea of keeping data and keywords out of the code was not new. It already existed at the time I was still working with IBM Rational Robot. For example, the SAFS Framework by Carl Nagle [1] was the first framework I learnt about that followed a similar approach. Another is TestFrame, an implementation of Hans Buwalda's so-called "Action Words" [2].
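The action-word idea can be sketched roughly as follows. This is neither our old Excel framework nor SAFS or TestFrame: the sheet is represented as CSV text instead of an Excel file, and `FakeApp`, the action words and the column names are all assumptions so that the sketch runs without a browser.

```python
import csv
import io

# Hypothetical sheet content; in an Excel-based setup these would be the rows of a sheet.
SHEET = """action,target,value
open,login_page,
type,username,alice
type,password,secret
click,login_button,
verify_text,greeting,Hello alice
"""


class FakeApp:
    """Stand-in for the application under test."""

    def __init__(self):
        self.page = None
        self.fields = {}
        self.texts = {}


def do_open(app, target, value):
    app.page = target


def do_type(app, target, value):
    app.fields[target] = value


def do_click(app, target, value):
    if target == "login_button":
        app.texts["greeting"] = "Hello " + app.fields.get("username", "")


def do_verify_text(app, target, value):
    actual = app.texts.get(target)
    if actual != value:
        raise AssertionError(f"{target}: expected {value!r}, got {actual!r}")


# The only place where action words are tied to code.
ACTION_WORDS = {
    "open": do_open,
    "type": do_type,
    "click": do_click,
    "verify_text": do_verify_text,
}


def run_sheet(sheet_text, app):
    """Execute every row of the sheet and return the number of steps run."""
    steps = 0
    for row in csv.DictReader(io.StringIO(sheet_text)):
        ACTION_WORDS[row["action"]](app, row["target"], row["value"])
        steps += 1
    return steps


print(run_sheet(SHEET, FakeApp()), "steps executed")
```

The tester only edits the rows; everything below `ACTION_WORDS` is what needs an expert to maintain.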

Our Excel-based framework was quite a success within our headquarters in Switzerland and Germany, and our plan to have testers write their own scripts without programming skills went well. However, maintaining the framework wasn't quite that easy: it needed an expert to maintain all the UI locators and required extensions. This was sometimes a little too tricky for the non-techies. And we never managed to get the business analysts to go for it.

Later (in a different company), I used the same approach but realized that it doesn't have the same effect if you have testers WITH programming skills. Excel isn't seen as cool enough for writing automated tests, and if you sell such an approach to techies, they will raise their eyebrows.

So, we removed the Excel part and integrated everything into NUNIT. That was also easier to debug.

And now? A newcomer called Cypress has entered the market [3]. As I don't want to get stuck in sweet idleness, we are now starting a new adventure to see what it has to offer. We still keep our Selenium scripts, but they are going into maintenance mode right now.

But, do we really have to follow each new fashion trend? Who guarantees that new stuff and ideas are really better than what we already have in place?

Fortunately, in my position as QA Manager, I can mostly set the goals myself in this area. If things are going well, you have the dilemma between "don't touch a running system" and ensuring we are not missing something.

Today, we use Selenium/C# with NUNIT for automated UI tests triggered daily by Jenkins. And, we have an automated test suite that fires requests on an interface level (below the UI) following the Test Automation Pyramid approach [4].

The problem: Everything has been working smoothly for years! Why should I spend time investigating alternatives?

I am thinking here of Lindy's Law [5]. If something has proven itself for a long time, there is a high probability that it will continue to prove itself in the future. In my case, this applies in particular to these automated API tests, which are based on a framework we developed ourselves with the aim of keeping the test scripts at the highest possible level of abstraction. The technical details, such as authentication and communication with the backend, remain hidden. Also, instead of just having JSON-based input/output, we are dealing with deserialized business objects.
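What "highest possible level of abstraction" can look like is sketched below. Our real framework is written in C# and looks different; this Python version only illustrates the principle, and `FakeBackend`, `OrderApi`, `Order` and the path `/orders/...` are hypothetical names, not part of any real interface.

```python
import json
from dataclasses import dataclass


@dataclass
class Order:
    """Business object the test script works with instead of raw JSON."""
    order_id: str
    customer: str
    status: str


class FakeBackend:
    """In-memory stand-in for the real backend, so the sketch is runnable."""

    def login(self, user, password):
        return "token-for-" + user

    def get(self, path, token):
        if not token.startswith("token-for-"):
            raise PermissionError("not authenticated")
        order_id = path.rsplit("/", 1)[-1]
        return json.dumps({"order_id": order_id, "customer": "Jane Doe", "status": "sent"})


class OrderApi:
    """Hides authentication and transport from the test scripts."""

    def __init__(self, backend, user, password):
        self._backend = backend
        self._token = backend.login(user, password)

    def get_order(self, order_id):
        raw = self._backend.get("/orders/" + order_id, self._token)
        return Order(**json.loads(raw))  # deserialize into a business object


def test_order_is_sent():
    # The test reads like a business statement: no tokens, no JSON parsing.
    api = OrderApi(FakeBackend(), "tester", "not-a-real-password")
    order = api.get_order("4711")
    assert order.status == "sent"
    assert order.customer == "Jane Doe"


test_order_is_sent()
```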

We have at least 3000 automated tests that have identified bugs that were not caught by the developers' unit tests. Simply put, these interface tests are a success story and I don't spend a second thinking about replacing them with a standard product. Why should we scan the market for "better" stuff that maybe isn't?

Because examining alternatives does not necessarily lead to the replacement of a tried and tested system. It can become a source of interesting new ideas and extensions for the existing solution. Being open-minded also helps to recognize the limits of one's own system and thus to check whether the current version can last not only in the near but also in the far future, and whether it can be supplemented with one useful feature or another.

The only constraint I am dealing with in this regard is my available time. But that's another story.

References:

[1] SAFS, Carl Nagle

[2] "Action Words" by Hans Buwalda, Software Test Automation (Fewster/Graham), Addison-Wesley

[3] https://www.testautomatisierung.org/testautomatisierung-cypress-vs-selenium/

[4] Test Automation Pyramid, Fowler, https://martinfowler.com/articles/practical-test-pyramid.html

[5] Lindy's Law by Albert Goldman, 1964, https://www.sciencedirect.com/science/article/abs/pii/S0378437117305964 and "Das Magazin", Nr. 10, 10-11. März 2023

Saturday, March 4, 2023

Saturday, February 4, 2023

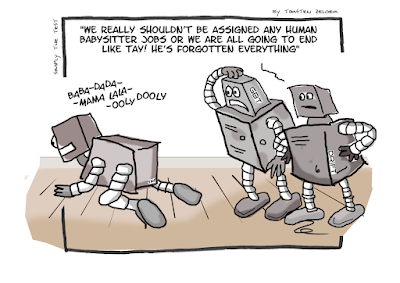

AI Adventures in Babysitting

I recently stumbled over an article about Microsoft's chatbot Tay which - after only 24 hours of "training" - turned into a more than questionable little "monster". The article was the inspiration for this cartoon.

Saturday, January 14, 2023

Time to illuminate

A crisis is a productive state. You simply need to get rid of its aftertaste of catastrophe.

Max Frisch.